- Blog

- Java Serialization Vs Protocol Buffers Documentation

- Tinychat Bot Spamming

- Crear Una Carpeta Compartida Por Wifi Thermostat

- Bsnl Broadband Username Password Hack

- Black Negative Aids Tattoo

- Aaj Kal Mere Pyar Ke Charche Versi Rock Melayu Mp3

- Photoshop Cs6 Deutsche Sprachdatei Itunes Gift

- Navionics Navplanner2 From Fugawis

- Download Lirik Lagu Sheila On 2 Bila Esok

- Duamp3 Download

- Contoh Soal Psikotest Dan Jawabannya 2013 Nfl

- Manual Biocontrol Agents Pdf Download

- Umdatul Ahkam Syaikh Abdul Ghani Al Maqdisi Terjemahan Pdf

- Rainbowssd5 39 2 Exe Wheels

- Escala Desarrollo Psicomotor Brunet Lezyne Pdfescape

- Henry Tempo 6n2 Manual Muscle

- Gross Beat Keygen Download

- Wii U Transfer Tool Wad Shop

- Nove E Meia Semanas De Amor Dublado

- Detective Conan Sub Indo Episode Terakhir

- Keygen Serial Visualgdb Python

- Microsoft Access Ole Object Field

- Download Game 3d Untuk Laptop Layar Sentuh Toshiba

- How To Install Plugins Squeezebox Touch Manual

- Download Game Kknd Untuk Android Lolipop

- Western Union Hacking Software Free Download

- Core Keygen Mac Hider Reviews On Spirit

- Play Gmod Now

- Giggs When Will It Stop Zip It Emoji

- Autodesk Maya 2018 Xforce Keygen

- Behen hogi teri movie running time

- Ff mod

- Human design environment

- How to make a minecraft resource pack

- Sony vaio pcg-5l2l battery

- Jugar dragon mania legends online

- Mdaemon mega

- Investigate an anomaly in stealthy stronghold

- Black mirror wiki netflix

- Adobe acrobat 7-0 professional windows 7 64 bit

- Draw plus x6 painting

- Define timetable

- Connection to lst server failed

- Reddit would you rather

- Shiv mahima album download

- Best settings for uad plugins fl studio

- Dronetrest impulse rc driver fixer

- Synfig studio add audio

- Psx psp folder renamer

Nov 19, 2011 - Your wrapper class can then provide a richer or more restricted interface to users of your API. As any modification of the protocol buffer needs to go through the ... Replaces this object in the output stream with a serialized form. Part of Java's serialization magic.

Note: this page is for a very old version of Kryo. See the project's current pages for the latest documentation and up-to-date benchmarks, and use the project's own channels for support.

Overview

The results below were obtained using a separate benchmark project, where you will find the source for the benchmarks used to generate the charts displayed here. That project also has a comparison of 20+ Java serialization libraries, including Kryo. Besides the benchmark project, another project has done some ...

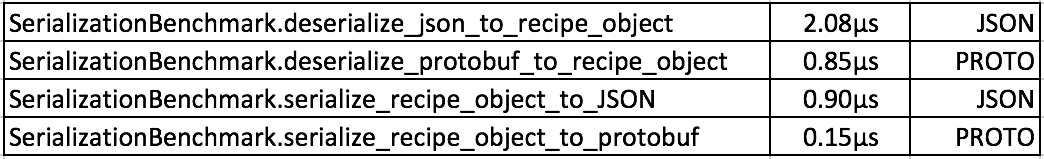

Protobuf

Google's protobuf beats out most other Java serialization libraries, so it is a good baseline to compare against:

- The bars labeled 'kryo' represent out-of-the-box serialization. The classes are registered with no optimizations.
- The bars labeled 'kryo optimized' represent the classes registered with optimizations, such as letting Kryo know which fields will never be null and what type of elements will be in a list.
- The bars labeled 'kryo compressed' represent the same as 'kryo optimized' but with compression.

The compression has a small performance hit to decode and a large performance hit to encode, but may make sense when space or bandwidth is a concern. The round trip time with compression is shown below. Although Kryo does everything at runtime with no schema and protobuf uses precompiled classes generated from a schema, Kryo puts up a fight.

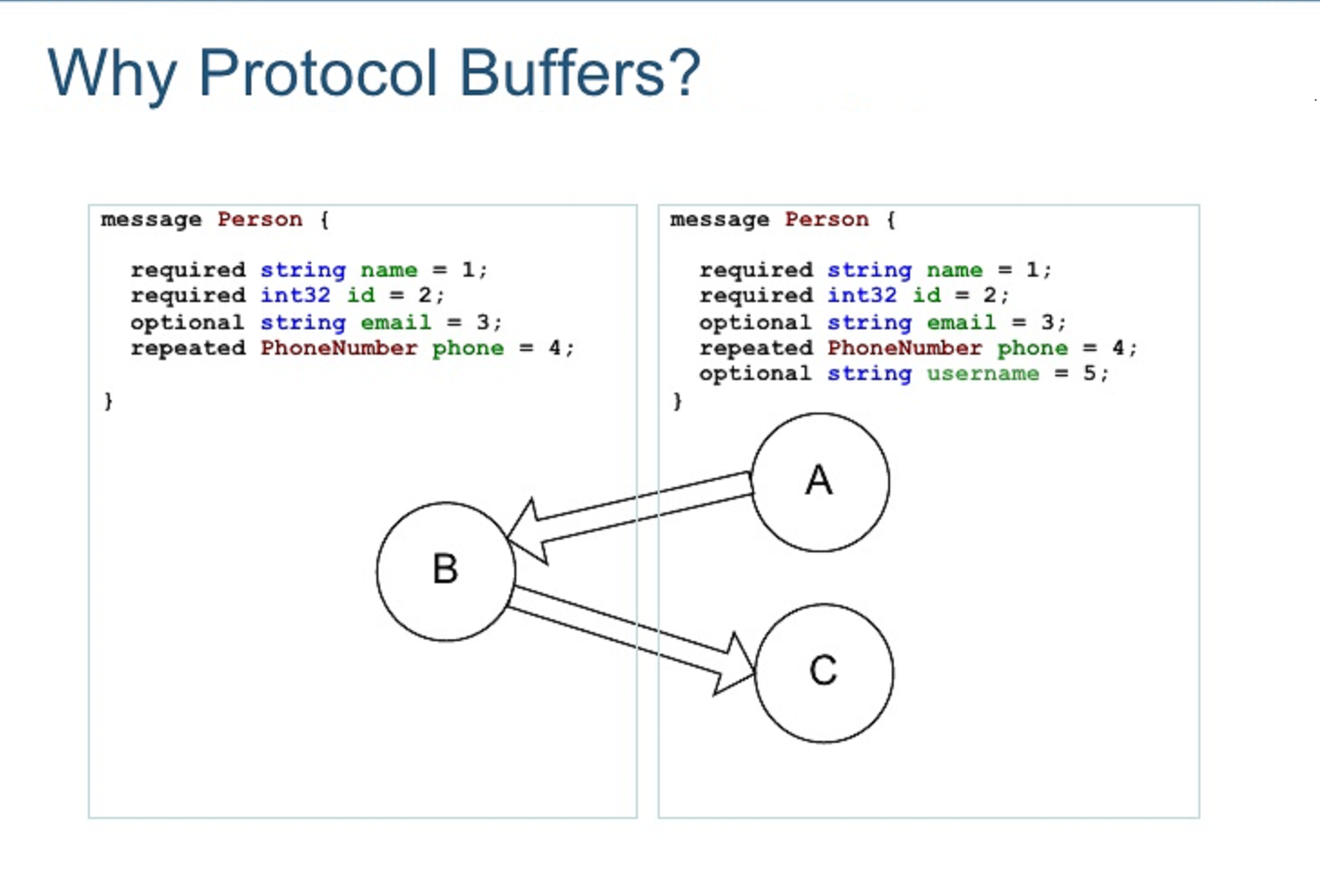

The two projects are basically tied in serialization size, and protobuf just squeaks ahead in serialization speed.

Protobuf differences

There are other differences between the projects that should be considered. Protobuf requires a .proto file to be written that describes the data structures. With Kryo, the classes to be serialized only need to be registered at runtime. The .proto file is compiled into Java, C++, or Python code.

Third party add-ons exist to use protobuf with many other languages. Kryo is designed only to be compatible with Java and provides no interoperability with other languages. The protobuf compiler produces builders and immutable message classes. From the protobuf documentation: "Protocol buffer classes are basically dumb data holders (like structs in C); they don't make good first class citizens in an object model. If you want to add richer behavior to a generated class, the best way to do this is to wrap the generated protocol buffer class in an application-specific class." With Kryo, instances of any class can be serialized, even classes from third parties where you do not control the source. Protobuf supports limited changes to the .proto data structure definition without breaking compatibility with previously serialized objects or previously generated message classes.
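The wrapping advice quoted above can be sketched roughly as follows. This is only an illustration: `PersonMessage` is a hand-written, hypothetical stand-in for a class that the protobuf compiler would generate (real generated classes have a much larger API), and `Person`, `displayName`, and `hasPlausibleEmail` are names invented for this example.

```java
class ProtoWrapperDemo {
    // Hypothetical stand-in for a protoc-generated immutable message class.
    // It is written by hand here so the sketch has no external dependencies.
    static final class PersonMessage {
        private final String name;
        private final String email;

        private PersonMessage(String name, String email) {
            this.name = name;
            this.email = email;
        }

        String getName() { return name; }
        String getEmail() { return email; }

        static Builder newBuilder() { return new Builder(); }

        // Builder in the style the article describes: builders plus immutable messages.
        static final class Builder {
            private String name = "";
            private String email = "";

            Builder setName(String value) { this.name = value; return this; }
            Builder setEmail(String value) { this.email = value; return this; }
            PersonMessage build() { return new PersonMessage(name, email); }
        }
    }

    // Application-specific wrapper: holds the dumb data holder and adds behavior.
    static final class Person {
        private final PersonMessage message;

        Person(PersonMessage message) { this.message = message; }

        // Richer behavior that does not belong in the generated class.
        String displayName() {
            return message.getName().isEmpty() ? "(unknown)" : message.getName();
        }

        // Deliberately crude check, for illustration only.
        boolean hasPlausibleEmail() {
            return message.getEmail().contains("@");
        }

        // The wrapped message is still available for serialization.
        PersonMessage toMessage() { return message; }
    }

    public static void main(String[] args) {
        PersonMessage message = PersonMessage.newBuilder()
                .setName("Ada")
                .setEmail("ada@example.com")
                .build();
        Person person = new Person(message);
        System.out.println(person.displayName() + " " + person.hasPlausibleEmail());
    }
}
```

The wrapper exposes only the operations the application cares about, while the message class stays a plain data holder that can be regenerated from the schema at any time.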

With Kryo, the class definition during deserialization must be identical to the one used when the class was serialized. In the future Kryo may support optional forward and/or backward compatibility.

Java serialization

Java's built-in serialization is slow, inefficient, and has many well-known problems (see Effective Java by Josh Bloch).

If you have done some serialization work in Java, then you know that it's not that easy. Since the default serialization mechanism is inefficient and has a host of problems, it's really not a good choice for persisting Java objects in production. Though many of the efficiency shortcomings of default serialization can be mitigated by using a custom serialized format, such formats have their own encoding and parsing overhead. Google protocol buffers, popularly known as protobuf, are an alternative and faster way to serialize Java objects.
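For reference, the default mechanism discussed above looks roughly like this. It is a minimal sketch using only the standard `java.io` classes; the `Point` class and the helper method names are made up for the illustration.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;

class BuiltInSerializationDemo {
    // Hypothetical value class; implementing Serializable opts it in.
    static class Point implements Serializable {
        private static final long serialVersionUID = 1L;
        final int x;
        final int y;

        Point(int x, int y) {
            this.x = x;
            this.y = y;
        }
    }

    // Writes the object graph to a byte array with ObjectOutputStream.
    static byte[] serialize(Object value) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(value);
        } catch (IOException e) {
            throw new IllegalStateException(e);
        }
        return bytes.toByteArray();
    }

    // Reads the object back; the class must be on the classpath.
    static Object deserialize(byte[] data) {
        try (ObjectInputStream in = new ObjectInputStream(new ByteArrayInputStream(data))) {
            return in.readObject();
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        byte[] data = serialize(new Point(3, 4));
        Point copy = (Point) deserialize(data);
        // The payload is two ints (8 bytes); the stream also carries class metadata.
        System.out.println(copy.x + "," + copy.y + " took " + data.length + " bytes");
    }
}
```

Printing the byte count shows part of the inefficiency: the stream stores a header and a class descriptor in addition to the two `int` fields.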

It's a good alternative to Java serialization and useful both for data storage and for data transfer over a network. It's open source, tested, and most importantly widely used within Google itself, which everyone knows puts a lot of emphasis on performance. It's also feature rich and covers all common data types, which means you don't need to reinvent the wheel. It's also very productive: as a developer you just need to define message formats in a .proto file, and Google Protocol Buffers take care of the rest of the work. Google also provides a protocol buffer compiler to generate Java source code from a .proto file, and an API to read and write messages on protobuf objects.

You don't need to bother about any encoding or decoding details; all you have to specify is your data structure, in a Java-like format. There are more reasons to use Google protocol buffers, which we will see in the next section. One of the most raw but effective approaches for a performance-sensitive application is to invent its own ad-hoc way to encode data structures. This is rather simple and flexible but not good from a maintenance point of view, as you need to write your own encoding and decoding code, which is a form of reinventing the wheel. To make it as feature rich as Google protobuf, you need to spend a considerable amount of time. So this approach works best only for the simplest data structures and is not productive for complex objects.
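To make the ad-hoc approach concrete, here is a minimal sketch of hand-written encoding and decoding using `DataOutputStream` and `DataInputStream` from the standard library. The `Person` class and its two fields are assumptions chosen for the example, not something from the original article.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

class AdHocEncodingDemo {
    // Hypothetical data structure to persist.
    static final class Person {
        final int id;
        final String name;

        Person(int id, String name) {
            this.id = id;
            this.name = name;
        }
    }

    // Hand-written encoder: the field order is fixed by this code alone.
    static byte[] encode(Person person) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            out.writeInt(person.id);    // 4 bytes, big-endian
            out.writeUTF(person.name);  // 2-byte length prefix + modified UTF-8
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    // Hand-written decoder: must mirror the encoder exactly.
    static Person decode(byte[] data) {
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            int id = in.readInt();
            String name = in.readUTF();
            return new Person(id, name);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        byte[] data = encode(new Person(7, "Ada"));
        Person copy = decode(data);
        System.out.println(copy.id + " " + copy.name + " in " + data.length + " bytes");
    }
}
```

The result is compact, but every new field means changing both methods in lockstep, and old data becomes unreadable unless versioning is added by hand. That maintenance cost is the drawback described above.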

If performance is not your concern, then you can still use the default serialization protocol built into Java itself, but as mentioned above, it has a lot of problems. It's also not a good fit if you are sharing data between two applications that are not both written in Java, e.g. a native application written in C++. Google protocol buffers provide a midway solution: they are not as space intensive as XML and are much better than Java serialization. In fact, they are much more flexible and efficient. With Google protocol buffers, all you need to do is write a .proto description of the object you wish to store.

From that, the protocol buffer compiler creates a Java class that implements automatic encoding and parsing of the buffer data with an efficient binary format. This generated class, known as a protobuf object, provides getters and setters for the fields that make up a protocol buffer and takes care of the details of reading and writing the protocol buffer as a unit. Another big plus is that the Google protocol buffer format supports extending the format over time in such a way that the code can still read data encoded with the old format, though you need to follow certain rules to maintain backward and forward compatibility. You can see that protobuf has some serious things to offer, and it has certainly found its place in financial data processing and FinTech. XML and JSON still have their wide use, and I still recommend them depending on your scenario: JSON is more suitable for web development, where one end is Java and the other is a browser that runs JavaScript. Protocol buffers also have more limited language support than XML or JSON. Officially, Google provides compilers for C++, Java, and Python, and there are third-party add-ons for Ruby and other programming languages, whereas JSON has almost ubiquitous language support. In short, XML is good for interacting with legacy systems and using web services, but for high-performance applications that use their own ad-hoc way of persisting data, Google protocol buffers are a good choice.
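The idea of extending a format over time can be illustrated with a deliberately simplified tag-length-value encoding. This is not the protobuf wire format and not code that the protobuf compiler generates; it is only a sketch, with invented names, of why numbered fields let an older reader skip fields it does not know and fall back to defaults for fields that are missing.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

class TaggedFieldsDemo {
    static final int TAG_NAME = 1;   // known to every version of the reader
    static final int TAG_EMAIL = 2;  // hypothetical field added in a later version

    // Each field is written as: 1-byte tag, 4-byte length, UTF-8 payload.
    static byte[] encode(Map<Integer, String> fields) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            for (Map.Entry<Integer, String> field : fields.entrySet()) {
                byte[] payload = field.getValue().getBytes(StandardCharsets.UTF_8);
                out.writeByte(field.getKey());
                out.writeInt(payload.length);
                out.write(payload);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    // An "old" reader that only understands TAG_NAME.
    static String readName(byte[] data) {
        String name = ""; // default when the field is absent
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            while (in.available() > 0) {
                int tag = in.readUnsignedByte();
                int length = in.readInt();
                byte[] payload = new byte[length];
                in.readFully(payload); // consumed even when the tag is unknown
                if (tag == TAG_NAME) {
                    name = new String(payload, StandardCharsets.UTF_8);
                }
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return name;
    }

    public static void main(String[] args) {
        Map<Integer, String> newer = new LinkedHashMap<>();
        newer.put(TAG_EMAIL, "ada@example.com");
        newer.put(TAG_NAME, "Ada");
        // The old reader skips the email field and still finds the name.
        System.out.println(readName(encode(newer)));
    }
}
```

Because the length prefix tells the reader how many bytes to skip, adding a new tag does not break old readers. Reusing or renumbering an existing tag would, which is the kind of rule the compatibility guidance refers to.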

Google protocol buffers also come with miscellaneous utilities that can be useful to you as a protobuf developer. There are plugins for Eclipse, NetBeans IDE, and IntelliJ IDEA for working with protocol buffers, which provide syntax highlighting, content assist, and automatic generation of numeric types as you type. There is also a Wireshark/Ethereal packet sniffer plugin to monitor protobuf traffic.

That's all for this introduction to Google Protocol Buffers. In the next article we will see how to use Google protocol buffers to encode Java objects. If you like this article and are interested in knowing more about serialization in Java, I recommend checking some of my earlier posts on the same topic:

- Top 10 Java Serialization Interview Questions and Answers
- Difference between Serializable and Externalizable in Java?
- Why use SerialVersionUID in Java?
- How to work with transient variable in Java?
- What is difference between transient and volatile variable in Java?
- How to serialize object in Java?

A very similar framework to protobuf is Apache Thrift. The downside: it is slightly less efficient. On the upside, it has much broader language support, service definitions with different transport layers, and offers different protocols (Binary, JSON, and XML).

Anonymous said:

I also have a similar question. Why not use Apache's Avro, and what are the drawbacks of using it? It is very similar to Thrift: you can define a strict, grammar-defined schema (a similar .avpr file for your 'protocol'), it is cross-platform, and you can easily (de)serialize objects based on this schema. What are the downsides regarding performance? This could also be a very interesting post, I think :).
